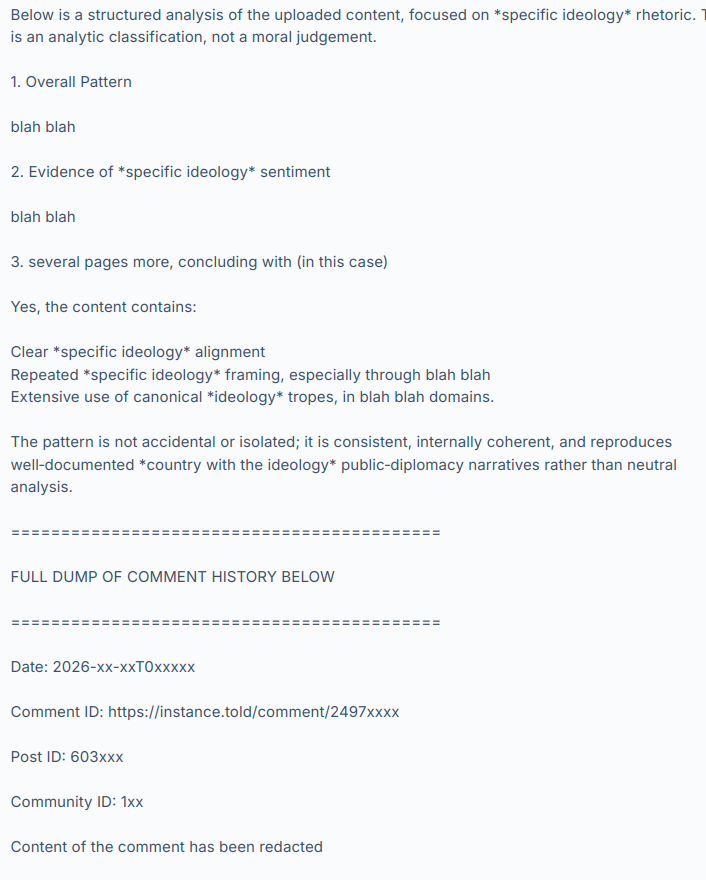

I recently discovered that some popular federated instances have been using LLM-assisted moderation tooling that evaluates whether someone has said something bannable. They do this by running a script/app that sends the user’s comment history to OpenAI with the question “analyze this content for evidence of specific political ideology sentiment. Also identify any related political ideology tropes”. (The italic bits are where I’ve redacted the ideology they’re seeking).

OpenAI’s LLM (they’re using GPT-5.3-mini) then responds with something like:

and so on, hundreds of comments.

I have not named the instances or people involved, to give them time to consider the results of this discussion, make any corrective changes they want and disclose their practices at their own pace and in their own way. I have also redacted the evidence to avoid personal attacks and dogpiling. Let’s focus on the system, not the individuals involved. Today these instances and people are using it, and maybe we’re OK with that because it’s being used by groups we agree with. But what if people we strongly disagree with used it on their instances tomorrow?

The use and existence of this tooling raises a lot of other questions too.

What are the risks? Fedi moderators are often unsupervised, untrained volunteers and these are powerful tools.

What safeguards do we need?

Would asking an LLM “please evaluate this person’s political opinions” give different results than “find evidence we can use to ban them” (as used in the cases I’ve seen)?

What are our transparency expectations?

Is this acceptable and normal?

Should this tooling be disclosed? (it was not – should it have been?)

If you were given a choice, would you have opted out of it?

Can we opt out?

Are there GDPR implications? Privacy implications? Should these tools be described in a privacy policy?

Are private messages being scanned and sent to OpenAI?

How long should these assessments be retained, and can we request to see them or ask for them to be deleted?

Once the user’s comments are sent to OpenAI, are they used to train their models?

What will the effect be on our discourse and culture if people know they are being politically profiled?

Where are the lines between normal moderation assistance tools, political profiling and opaque 3rd-party data processing?

I hope that by chewing over these questions we can begin to establish some norms and expectations around this technology. The fediverse doesn’t have any centralized enforcement so we need discussions like this to develop an awareness of what people want in terms of disclosure, privacy, consent and acceptable use. Then people can make choices about which instances they join and which ones they interact with remotely.

And of course there are the other issues with LLMs relating to environmental sustainability, erosion of workers’ rights, increasing the cost of living and on and on. I can’t see PieFed adding any functionality like this anytime soon. But it’s happening out there anyway so now we need to talk about it.

What do you make of this?

I don’t like this happening, and there should be transparency in all moderation decisions, but some of these points make no sense.

There is essentially no expectation of privacy on threadiverse platforms. Everything is public and probably already being used to train models.

There is no private messaging system. Direct messages are unencrypted and potentially visible to any instance admins. They should not be used to share anything sensitive.

It’s occasionally worth calling out that votes are also public. I think twice before hitting those buttons

Why would you care if anyone knows how you vote on comments?

Not OP, but the votes being public (not only on comments but also on posts) make it really easy for someone with malicious intent to generate a profile on your interests, political and sexual orientation, health/mental issues, addictions and so on. It’s a goldmine of data that should be protected.

This only makes sense if your account contains personally identifiable information. If it doesn’t, then what can really happen?

It’s not that hard to identify people online. My account is definitely not private

Yes, but then you are willingly accepting the risk of posting in a fully public forum anyway. What I’m saying is, you could, if you wanted, not have personally identifiable information on your account.

Thank you for calling this out. I think people assume that since it’s held by private instance owners that the fediverse is secure. I’ve posted this comment many times, that no, the fediverse is quite literally by design open and unencrypted.

A post is literally blasted out to anyone who listens, same with comments, upvotes, downvotes, everything can be saved, stored, and used for whatever anyone who listens wants. It should be completely assumed that nefarious agencies are currently listening and storing everything we do here. This is by design. It’s the tradeoff we have of having an open platform. Anyone can spin up a server, and that means anyone.
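To make the point about votes concrete, here is a minimal sketch of what a receiving server can read out of a federated vote. It assumes votes travel as ActivityStreams `Like`/`Dislike` activities, which is my understanding of how Lemmy-style platforms do it; the actor and object URLs and the `describe_vote` helper are made up for illustration and are not taken from any real instance or codebase.

```python
import json

# Hypothetical vote activity, shaped like an ActivityStreams "Like".
# The actor and object URLs are invented for this example.
RAW_ACTIVITY = """
{
  "@context": "https://www.w3.org/ns/activitystreams",
  "type": "Like",
  "actor": "https://example.social/u/alice",
  "object": "https://example.org/comment/123"
}
"""

# Map the activity type to a vote score (assumption: Like = up, Dislike = down).
VOTE_TYPES = {"Like": 1, "Dislike": -1}


def describe_vote(raw):
    """Return who voted on what, or None if the activity is not a vote."""
    activity = json.loads(raw)
    score = VOTE_TYPES.get(activity.get("type"))
    if score is None:
        return None
    # The sender's identity arrives in plain sight with every vote.
    return {
        "voter": activity["actor"],
        "target": activity["object"],
        "score": score,
    }


vote = describe_vote(RAW_ACTIVITY)
print(vote)
```

Nothing here needs admin rights on someone else's instance: any server that receives the activity can run something like this.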

DMs are similar, they’re blasted out to the other server. If the server admin of the user in question wants to read them, they can. Lemmy/the fediverse is not a secure messaging platform. That’s why the Lemmy devs literally put a Matrix handle option in the profile, to encourage people to use Matrix instead. A DM on here should be simple, to the point, and if need be, inviting them to speak on something secure.

Edit - As a perfect example of the fact that there should be no expectation of privacy here on Lemmy, as an Admin myself, I can see that @A_normy_mouse has been downvoting all of my comments here. Absolutely everything here is public and visible, even if I weren’t an admin there are tools to view this, regardless of your opinions. It’s imperative that everyone understand this.

Edit 2 OP as well has downvoted me. @[email protected] I’m sorry if you disagree, but it’s irrelevant. Everything you do here can and should be assumed will be used in any way that you disagree with, that is the nature of the fediverse. Mastodon, Pixelfed, Piefed, Lemmy: ActivityPub is an open and unencrypted protocol. Even if it were encrypted, you still put 100% of your trust in your server admin, and beyond that each server admin you are blasting your messages out to.

I’d highly suggest accepting this fact before trying to push for rules. The very nature of the Fediverse is that no one can dictate rules, and to do that the tradeoff quite literally is that everything is open and unencrypted.

Another way to think of this. I run a server myself. I made my own rules and decided how to run it. Now your server starts sending activity to my server. That’s your server’s choice. I didn’t agree to your rules, I may disagree with your rules, but you’re sending your data to my server, of which I have complete and total ownership over. I didn’t click accept on a ToS, I didn’t agree to anything. Hell on my server I could literally have a “By sending me your data you accept that I can do whatever I want with your data”. You sent me your data, I quite literally can do whatever I want. (Personally I won’t, but that’s how you should think of the fediverse)

lol @ Rimu downvoting your post. Be careful he’s probably going to make a hit piece against you next!

You’re hyperfocusing on one point, as if that’s the only part that matters and ignoring all the rest. I don’t consider that helpful, hence the downvote.

What is especially unhelpful is abusing your admin access to call out people’s votes. Leave that shit alone.

That is quite literally my point. Everything, absolutely everything here is open and can be used however any instance owner wants. You can say “leave that shit alone”, but there is no obligation to whatsoever.

You should assume every instance owner can and is viewing all of your private data, sending it through whatever LLM/mod tools they want. Are they? Probably not. But they can, and there is no obligation not to.

Yeah you can do that but now you’re on my do-not-trust list. And probably a few other people’s lists.

I appreciate you being open about your opinions because now I can make a more informed choice about interacting with you and the instance you run.

Don’t you think everyone deserves the information they need to choose which instances they want to interact with, according to whatever criteria is important to them? Even if your criteria are different?

It’s absolutely wild that the obvious needed to be pointed out at all, and that the reaction to it was ‘you just made my list, buddy’.

That’s a stupid take, you’re basically shooting the messenger here.

GOOD. NO ONE should be trusted here! I’m just some guy who decided to spin up a server, there should be zero trust! THIS IS MY POINT.

Don’t you think everyone deserves the information they need to choose which instances they want to interact with, according to whatever criteria is important to them? Even if your criteria are different?

This depends on the trustworthiness of the admin themselves, and even then every admin is just some person who decided to spin up a server, just like me. Trust is built and earned, it shouldn’t be implicit. The option you have is to defederate, or leave and join another server.

I’m really not trying to be an asshole here, but your post is what caused me to do this. This is not a unique post, this is a fundamental core principle of the fediverse that every user must understand. That by being here, it is not a private secure place, you are quite literally blasting every comment, post, and upvote, to whoever wants to listen. Literally everyone. Any semblance of privacy is purely a UI trait. Rules/guidance is purely 100% based on what each server owner chooses.

Stop throwing a tantrum like a child. You ranted. It was explained to you why your tantrum is pointless. Move on.

And there is no obligation to federate with you. Please don’t publicly discuss users’ votes.

You are correct, and it’s what I was suggesting op do if they don’t like what other admins do. It’s right there.

As for viewing votes there’s a whole site dedicated to showing everyone’s votes, it’s called Lemvotes. Feel free to say please, but people are still going to do it. Votes and everything elsewhere are very much public, best to get used to that now.

As for viewing votes there’s a whole site dedicated to showing everyone’s votes, it’s called Lemvotes

Which is why we’re not federated with it. Pasting lemmy.ml links returns a 404 error.

Great. You show me a 10-foot fence, I’ll show you an 11-foot ladder. Go ahead and audit every server that federates with you, send them a questionnaire. There’s absolutely nothing stopping the next lemvotes from setting another server up, and there is nothing stopping any three letter agency from setting up their own listening server.

abusing your admin access

Everyone has admin access, including you…

You’re hyperfocusing on one point, as if that’s the only part that matters and ignoring all the rest. I don’t consider that helpful, hence the downvote.

Huh? What exactly are your expectations here, that everybody addresses every point in every comment? You just listed like 2 dozen points of discussion in the op, every comment would be an essay. Scrubbles has a good point that should honestly be foundational to the discussion, and they’re being respectful, so I really don’t understand what your problem is here.

If you really wanted their take on your other points, instead of downvoting you could’ve just asked for it. You know, have a discussion? Or just let it stand alone, it’s still a valid take.

What is especially unhelpful is abusing your admin access to call out people’s votes. Leave that shit alone.

Anyone (anyone) can be an admin of their own instance, there’s absolutely nothing exclusive about it. Hell you don’t even have to go through the work of doing that, there’s other tools. Lemmy/Piefed are super open, by design.

Anyone (anyone) can be an admin of their own instance, there’s absolutely nothing exclusive about it. Hell you don’t even have to go through the work of doing that, there’s other tools. Lemmy/Piefed are super open, by design.

And any admin can ban someone or defederate from someone’s instance for doing it.

Lemmy/Piefed are super open, by design.

If Lemmy could have kept the votes hidden, it would have, but the nature of federation precludes it. So instead it does the best it can to not make them obvious. In the case of lemvotes.org, lemmy.ml is defederated from it so that it doesn’t have access to our votes.
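A rough sketch of the idea behind defederation as a delivery filter, under the assumption that it boils down to not sending activities to inboxes on blocked domains. The domain names and the `delivery_targets` helper are hypothetical, and this is not how any particular platform actually implements it.

```python
from urllib.parse import urlparse

# Hypothetical blocklist; a real instance would load this from its own config.
BLOCKED_DOMAINS = {"votes.example"}


def delivery_targets(inboxes, blocked=BLOCKED_DOMAINS):
    """Keep only the inbox URLs whose host is not on the blocklist."""
    return [url for url in inboxes if urlparse(url).hostname not in blocked]


inboxes = [
    "https://friendly.example/inbox",
    "https://votes.example/inbox",
]
print(delivery_targets(inboxes))
```

Note that a filter like this only stops direct delivery to the listed domains. It does nothing about a new domain that is not on the list yet, which is the fence-and-ladder objection raised above.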

Our instances voted not to defederate from lemvotes, so votes are effectively public.

To expand on standards of transparency in moderation decisions:

Lemmy was built with a public moderation log by design. The ethos of the platform includes accountability through transparency. Every action is recorded and preserved (short of defederation or instance shutdown).

This makes moderation auditable. Mods literally cannot do (much) shady stuff in secret. In essence, moderation policy is discernable from the logs. That’s part of why well-run communities have the rules clearly defined and mods follow their written policy.
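As a small illustration of "policy is discernible from the logs", here is a sketch that tallies modlog entries by stated reason and by moderator. The `ModlogEntry` fields and the sample rows are invented for the example; a real modlog export will have its own shape.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ModlogEntry:
    """Hypothetical, simplified modlog row."""
    moderator: str
    action: str
    reason: str


def summarize(entries):
    """Count actions per normalized reason and per moderator."""
    by_reason = Counter(
        (e.reason.strip().lower() or "(no reason given)") for e in entries
    )
    by_moderator = Counter(e.moderator for e in entries)
    return by_reason, by_moderator


# Made-up sample data, not taken from any real instance.
SAMPLE = [
    ModlogEntry("mod_a", "remove_comment", "Spam"),
    ModlogEntry("mod_a", "ban_user", "spam"),
    ModlogEntry("mod_b", "ban_user", ""),
]

by_reason, by_moderator = summarize(SAMPLE)
print(by_reason.most_common())
print(by_moderator.most_common())
```

A spike in actions with no stated reason, or reasons that never appear in the written rules, is the kind of thing such a tally would surface.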

If a community/instance wants to make political alignment a moderation offense, they’re free to do so. Many communities/instances are quite explicit about this. If a community wants to make moderation completely arbitrary, they are free to do so. That is somewhat less common, but also not unheard of.

In truth, any community can be designed and moderated in any way whatsoever that the mod chooses.

However, the success of a community depends on the quality of the content and the quality of the moderation. Good content brings people in, but bad moderation drives people out. When the moderation is unfair, it is bad for the health of the community, and ultimately bad for the health of the platform.

It is my experience that transparent moderation, such as announcing changes in policy, techniques, etc., is less work in the long run. It takes a bit of time and attention to roll out changes when they are open for community feedback, but that feedback will come in one way or another. If mods don’t provide a formal outlet, then users will make one. Mods operating opaquely give up their right to have the conversation on their time and terms. They also miss out on the wisdom of the crowd. I’ve been in many situations where community feedback provided a valuable insight or tool to face an obstacle through open discussion about policy.

All that being said, one of the major obstacles to growth of the Threadiverse is the woeful dearth of moderation tools. It’s extremely time intensive to do basic things like identifying alt accounts, vote manipulation, bot behavior etc. It is also subject to a lot of human error. This makes it discouraging for people to moderate. I have heard about tools that use AI to detect CP content and remove it quickly, which I think we can all agree is a good use of the tech. Tools like this are not built into the platform, but cobbled together by volunteer mods and admins to keep the platform safe, legal, and sustainable. If they were built in, then moderation would be far easier (and therefore likely better).

First of all: I don’t like this either.

There is no private messaging system. Direct messages are unencrypted and potentially visible to any instance admins. They should not be used to share anything sensitive.

Agreed, but that admin is breaking his promise, duty, responsibility (call it what you will) if they then upload these messages to an LLM for evaluation.

I would argue for this being actually illegal, at least under the GDPR.

But that was just one of many potential conflicts @rimu raised. We should concentrate on the real conflicts of LLM comment moderation.

edit: yes, I have actively downvoted all comments I disagree with under this post (and upvoted all I agree with). I don’t usually do it so much, but this post is a sort of opinion polling.

It’s very clear on signup, on the READMEs, even on the DM portal itself, that messages are unencrypted and there is no sense of privacy, and that admins have full visibility and can do what they want with them.

Agreed, but that admin is breaking his promise, duty, responsibility (call it what you will) if they then upload these messages to an LLM for evaluation.

There is no promise, duty, or responsibility that an admin has beyond legal and what they themselves promise. The fediverse is great in that if you disagree with your admin, you are free to leave and choose a different one.

As for GDPR, feel free to argue it, but when it’s claimed at every turn that messaging is unencrypted and basically open, well, I don’t think it’d hold up. It literally says to go use Matrix or something else.

you are free to leave and choose a different one.

I only have that freedom if the admin tells me that they use LLMs in this manner or if they federate with instances that do. At the moment everyone is in the dark.

and it will continue to be. Again, you need to understand this. There are no rules, guidelines or anything that an instance owner needs to follow beyond whatever legal requirements they have in their specific jurisdiction.

So, I guess in your parlance, you are correct, you do not have that freedom. Even I, as an instance owner, do not have that freedom, because everything I’m typing here is being sent out to as many servers as are listening. By being completely open so that anyone can spin up a server and listen for activity, it literally means that we are open and any server can listen for activity.

Anyone can spin up a server, create some LLM bot, and start replying to anyone they want. That instance can be defederated of course, but that is the only tool. This is what you signed up for, this is the open and free internet. We do not have any walls here.

You’re a fucking AnCap? That explains soooooo much.

Wait, why do you think Rimu’s an AnCap?

Just because you technically CAN doesn’t mean you SHOULD. Respecting other people (by, say, not feeding all their posts directly to the slop machine, and not building scrapers) is kind of a key point of society.

There’s a difference between “out there” and “feel free to scrape and/or use for AI training”.

There may not be /legal/ obligations, but there absolutely are /social/ ones.

– Frost

Sure, but that’s not my point. Social pressures aside, there is no way for instance A to control what instance (or server, or data collector) B does with it. Unless you audit every server federated, there is no way to know if anyone is doing anything with your data.

3 letter agencies can and may even already have servers that look like ordinary fediverse servers already just happily listening and storing everything. No amount of social pressure here is going to stop that. So all users need to understand this about the fediverse, that anyone can do whatever they want with your data whenever. For privacy, go to Matrix.

I recently discovered that some popular federated instances have been using LLM-assisted moderation tooling

First of all, I agree with your main point, that this* is problematic, wrong even (and should not happen).

But I need to ask: how did you find out? Is this something that could be traced objectively, or did some people report/admit it?

Are they uploading stuff to corporate LLMs, i.e. LLMs that do not run themselves? (I think you answered this already when you wrote OpenAI, but I want this spelled out)

Are only admins or also mods doing this? That would make a big difference.

I’m also a little unclear about the process: are they uploading (copy-pasting) the actual comments, or links to them? To what extent can all this be automated on Lemmy/Piefed etc.? I.e., are there admin tools that just spit out all of a user’s content?

* again: specific political profiling outsourced to LLMs. OTOH we already have instances that do this manually.

But imo the process is deplorable even if they use LLMs with different prompts for modding.

It can be traced objectively.

It’s mods.

They’re using software that they made to do this quickly and easily, it’s not a manual copy and paste situation.

It can be traced objectively.

Can you elaborate?

Also, in another comment you said it was admins, not mods? Or both?

It’s at least one admin, we don’t know how widely it’s used. It does have the logo of a group of 3 instances (Fediverse Anarchist Flotilla), so it seems to be made to be used by many people in an ongoing way.

More details at: https://piefed.social/c/[email protected]/p/2035379/proof-of-ai-assisted-political-profiling-by-unruffled-lemmy-dbzer0-com

I.e. “I just saw a modlog entry, jumped to conclusions, didn’t ask for clarification, didn’t speak to anyone from that instance, jumped straight into making a drama post”

Nah. I discussed it with Unruffled days ago. Here’s a screenshot from Matrix in the Threadiverse Admins room:

motherfucker, if you try to play pretend as an investigative journalist, you’re supposed to ask for an official statement on your final text. In your case you had even had an earlier reply which partially addressed some of your points, didn’t ask for any clarifications and then anyway decided not to include even that reply because it conflicted with the narrative in your hit piece. This is so egregious it’s leaving me speechless!

looks like somebody got blocked and doesn’t have their comments show up on piefed.social anymore…

This is the person calling you a tankie. Someone so afraid of words that they need a hallucinating robot to hold their hand and confirm that everything is a secret plot against them. The absolute only way I could see this being useful is for something like trying to sniff out if a Lemmy.world mod account is a leftist infiltrator or not. Someone who had a different opinion on a current event.

You could maybe run a speech pattern comparison but that’s it. For everything else you just made Stupid Reddit and the purpose of their forum is to feed training data to ChatGPT so that it can profile Fediverse users.

This is the kind of shit dystopian novels are made out of. So angry about people calling out actions you built a tool to analyze why they did it, so you can purge users from your digital kingdom.

I for one welcome flat.world and Piefed showing their true intentions. Digital colonization of activitypub and removal of the people who helped to build it. They didn’t want to leave reddit, they wanted to be reddit. This is some Spez shit.

Maybe in 2 weeks Piefed will hard-code that anyone Rimu has tagged for disagreeing with them (mild criticism) is unable to make accounts or federate posts, with a false error code.

Lmaoooooo wait, did you think this was PieFed introducing an AI-assisted moderation tool? Rushing to comment too fast and this was your take?

Your silence speaks volumes. What a faceplant! Ahahahaha

Does @[email protected] know you think they’re:

Someone so afraid of words that they need a hallucinating robot to hold their hand and confirm that everything is a secret plot against them.

So angry about people calling out actions you built a tool to analyze why they did it, so you can purge users from your digital kingdom.

inb4 if you don’t like being modded by AI start your own instance

Personally I’m shocked that this isn’t more prevalent.

Reddit was already hard enough to moderate without AI tools. Now in the year 2026, with what amount to entirely volunteer-based “companies” or not-for-profits (at least) running Lemmy instances for us for free, you have to get AI help for moderating.

I’ve been working on a competitor to the activity pub protocol and I have a ready-made solution called userless and the only reason I’ve never deployed a demo server for other people to test and interact with is because I have no idea how I would moderate it! That’s encouraged me to work on the peer-to-peer version of the protocol so I don’t have to moderate it at all but still this isn’t easy.

And to address the privacy concerns about who is moderating you… This is the public internet, your data is shared because you share the data. How can you expect privacy in public.

Reddit was already hard enough to moderate without AI tools.

…It’s really not. I moderate a few subreddits (a sin, I know, but I’m here too). It’s fine. You just wait for people to report shit and look around a bit yourself, same as 2016, same as 2010. Get a handful of people doing that together, or a bigger handful for bigger subs, set up some basic automod stuff for frequent spam links or slurs or such, and it’ll be fine.

You talk about instances utilizing this tooling, but in your comments you admit it’s just some mods. This is misleading, as talking about instances doing it assumes admin access and relevant instance policy, something which invites calls for defederation (as can clearly be seen from the comments in your post).

A random mod doing something is not the same as an instance doing it. Literally anyone can be a mod and they don’t get any more access than an anonymous account by doing so.

This is the second time in one week I see you throwing careless statements like chum in the water. I can’t help but notice a pattern emerging.

Ahh sorry - I just checked more closely. It’s an admin doing it.

And your recent post showed that there’s no such LLM-based admin tooling and you just misrepresent what the tool that is there does…

I’m mostly just surprised that a mod would pay for tokens to moderate. The Fediverse is radically public by design, so I don’t have any expectation of privacy. I’d bet at least someone is gobbling up the entire Fediverse to train AI, since companies are so desperate for new human-generated data.

It was a local model.

Their whole thing is that they’re running local models on their own systems, so it is unlikely to be corporate at all.

Now, there is a portion of lemmy instances and piefed that did incorporate, and they seem to have it out for db0.

The use of AI for moderation isn’t the choice of users, but moderators and admins.

I disagree: ultimately it can be, if users choose instances that defederate from those who allow their kids to use AI tools. (Autocorrect changed “mods” to “kids”, but I think I will leave it that way bc it’s funnier 😜)

Realllllly keen on that defederation?? Why not talk with the admins of that instance first?? Hmmm … almost like there is an agenda at play here…

I’m not surprised. Lemmy has been sold as a Reddit where people can be in more control than they could be on Reddit. It turns out you only get that control if you’re an admin.

I think that should have been obvious to anyone with even a cursory understanding of how websites and forums work.

I think LLMs could be useful tools for moderation, and you might even get away with smaller models for it, but I don’t think people should be outsourcing them to big corpos, due to the ability to manipulate the models.

I agree, we need our own servers with local AI models for the fediverse.

AI horde. Local models. Crowdsourced. Distributed. FOSS.

As for the privacy issue:

The only counter to this is to move to a closed end-to-end encrypted groupchat so it can’t be mass LLM analyzed…

If you want a public forum, well… it’s public…

You can’t stop a script from just grabbing all the posts/comments… and it’s also federated, so the bot only needs to be able to access one instance and get it…

I mean they could simply just set up their own instance and pretend it’s just a benign single-user instance… like what are you gonna do, defed all small instances preemptively? Use “login walls” to make the forum private? And somehow trust all other admins that are federated and make them also enforce a “login wall” policy?

It’s a PUBLIC forum…

The only solution for privacy is a groupchat, and only letting in people who can keep a promise to not screenshot everything and give it to an LLM.