That’s particularly useful for pancreatic cancer, if it’s accurate, reliable, cost effective, and practical in the real world.

In other words: not useful at all. (Didn’t read the article because it already misuses the AI acronym in the title, indicating it was written by some idiot with nothing to say)

AI is different from LLM, you disingenuous fuck.

Did it get its knickers in a twist?

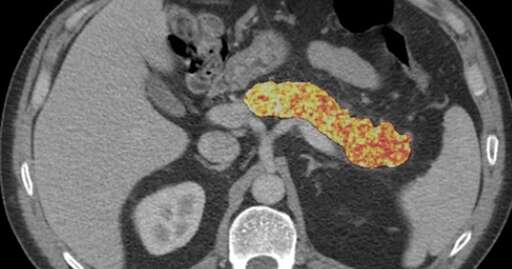

The article actually describes it well enough: scientists trained a model on data from CT scans of patients who were treated for other conditions some time before being diagnosed with pancreatic cancer.

In my first sentence, I was referring to the combination of adjectives in the question by the previous commenter. No one in today’s health care systems is gonna pay for preemptive screenings to save peasant lives like yours or mine.

There are healthcare systems in the world other than the one in the USA.

Yes, but all of them are worsening in the interests of profit, in case you weren’t following the news. Germany is just scrapping skin cancer prevention, thanks to our corrupt fucks in government.

Troll.

Of course you do, if treating the patient down the line is going to cost you more. Public health systems have a vested interest in healthier citizens.

The thing is providers of care like to make a profit though, and profit = money = influence on healthcare policies. Healthcare policies are not made solely with cost efficiency in mind, but rather to redistribute wealth from insurance payers to those who provide services. If that means a couple ten thousand of us peasants die a preventable death, then that’s a sacrifice they are willing to make.

Where you live maybe. The NHS is centrally funded through taxation.

That does not contradict anything I said.

It’s not, though, and that’s the issue.

False positives are at least as dangerous as false negatives, and AI solutions like this have massive problems with overdiagnosing.

EDIT: It’s really fun to have a bunch of home-bound tech workers try to talk down to me about the science behind and practice of medicine.

Saw your edit. I’m not a “home-bound tech worker” and I think you’re projecting. What’re your medical qualifications?

> False positives are at least as dangerous as false negatives and AI solutions like this have massive problems with over diagnosing.

Absolutely 100% wrong.

In pancreatic ductal adenocarcinoma, a false positive means a follow-up scan. A false negative means death: the 5-year survival is near zero once it’s caught late, but exceeds 80% when caught early.

In the study, the radiologists’ lower false positive rate is achieved by missing 78% of cancers. That’s not a safer trade-off, it’s just a different way to fail. “Overdiagnosis” also requires a disease that might not have harmed the patient, and PDA doesn’t have a harmless form. Every missed case is a lost life, while every false positive is an extra doctor’s appointment.

This system detects twice as many cancers and was flagging them, on average, 675 days (nearly 2 years!) before clinical detection.
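For anyone who wants to check the arithmetic, here is a rough sketch using only the figures quoted in this comment. The variable names are made up for illustration, and reading "twice as many" as "double the radiologists' sensitivity on the same cases" is my assumption, not something taken from the study itself.

```python
# Figures quoted in the comment above; nothing here is read from the paper directly.
RADIOLOGIST_MISS_RATE = 0.78  # "missing 78% of cancers"
DETECTION_RATIO = 2.0         # "detects twice as many cancers"
LEAD_TIME_DAYS = 675          # average lead time before clinical detection


def sensitivity_from_miss_rate(miss_rate: float) -> float:
    """Sensitivity is the share of true cancers that get caught: 1 - miss rate."""
    return 1.0 - miss_rate


radiologist_sensitivity = sensitivity_from_miss_rate(RADIOLOGIST_MISS_RATE)

# Assumption: "twice as many" means twice the radiologists' sensitivity
# on the same set of cases, capped at 100%.
implied_model_sensitivity = min(1.0, DETECTION_RATIO * radiologist_sensitivity)

# Convert the quoted lead time from days to years.
lead_time_years = LEAD_TIME_DAYS / 365.25

print(f"radiologist sensitivity:   {radiologist_sensitivity:.0%}")
print(f"implied model sensitivity: {implied_model_sensitivity:.0%}")
print(f"lead time:                 {lead_time_years:.2f} years")
```

Under those assumptions, that works out to roughly 22% sensitivity for the radiologists, about 44% for the model, and a lead time of about 1.85 years.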

You selected a single pathology which supports your otherwise specious and false argument.

Be better.

They selected the pathology that’s the topic of the post to support their on-topic argument. Be better, indeed.

Really wish people could be better collaborators instead of just being jerks. Kills any value in the conversation.